Cloud usage is growing fast, but so are the challenges that come with it. According to the Flexera 2024 State of the Cloud Report, 89% of organizations now use multiple cloud providers. At the same time, 81% of businesses struggle with managing their security and cloud governance. Without strong governance, organizations risk security breaches, compliance failures, and unexpected costs, often all at once.

One example of poor cloud governance is the Capital One data breach in 2019, where a misconfigured firewall in the company’s AWS environment let an attacker reach data stored in S3 and exposed over 100 million customer records. Misconfigurations like this are among the top causes of cloud security failures. They happen because policies are either missing or not enforced properly. This is where compliance audits come in. By defining governance policies in code, organizations can automate security checks, prevent misconfigurations, and ensure compliance in AWS environments.

What is Cloud Governance?

Cloud governance is the set of rules and controls that organizations use to manage cloud resources efficiently. These rules ensure that every resource follows security, compliance, and operational standards. Without governance, AWS environments can quickly become disorganized and non-compliant.

Risks of Poor Cloud Governance

Without a governance framework, misconfigurations and security gaps can easily go unnoticed. Some common risks include:

- Overly Permissive IAM Policies – Users and services may have more access than necessary, increasing security risks.

- Unsecured Storage – Publicly accessible S3 buckets or unencrypted EBS volumes may expose sensitive data.

- Weak Network Security – Poorly configured security groups and VPC rules can expose cloud resources to the internet.

- Compliance Failures – Without continuous monitoring, resources may drift away from industry standards like CIS, NIST, PCI-DSS, and ISO 27001.

Governance gaps often go unnoticed until an audit or a breach occurs. A single misconfiguration can lead to a major security incident, making continuous enforcement essential.

The Role of Compliance Audits in Enforcing Governance Standards

A compliance audit is a structured process to check if cloud environments follow security and regulatory policies. These audits help organizations:

- Detect Misconfigurations Early – Regular checks help identify security risks before they are exploited.

- Ensure Compliance with Industry Standards – Frameworks like SOC 2, HIPAA, and GDPR require strict security policies. Compliance audits verify adherence.

- Prevent Configuration Drift – Infrastructure may change over time. Audits ensure that cloud resources stay aligned with security baselines defined by the organization.

Manual audits require security teams to inspect cloud configurations at regular intervals, comparing them against compliance standards. This process often leads to inconsistencies, as different teams may interpret policies differently or miss configuration drift. Terraform reduces these inconsistencies by codifying security baselines and making compliance checks repeatable, so that infrastructure remains aligned with governance policies across all deployments.

Defining Cloud Governance Policies with Terraform

Cloud environments require well-defined governance policies to maintain security, enforce compliance, and prevent misconfigurations. Without strict policy enforcement, AWS environments can become fragmented, introducing security risks and operational inconsistencies. Terraform provides a way to codify governance policies, ensuring they are consistently applied across all infrastructure deployments. Governance policies in AWS typically focus on identity and access management (IAM), storage security, and network security. Each of these domains plays a crucial role in enforcing security best practices. Let’s examine how Terraform helps implement and enforce these governance policies at scale.

IAM Policies: Enforcing Least Privilege Access

IAM is the foundation of cloud security, controlling who can access resources and what actions they can perform. Over-permissioned IAM policies are a major security risk, often leading to privilege escalation and unauthorized data access. Enforcing the principle of least privilege (PoLP) ensures that identities only have the minimal permissions required to function. Terraform allows organizations to define IAM policies programmatically, ensuring uniform enforcement across environments. A common security requirement is restricting access to S3 buckets by assigning read-only permissions to a specific IAM role.

| Effect = "Allow", Action = ["s3:GetObject"], Resource = "arn:aws:s3:::my-secure-bucket/*" |

By defining IAM policies as code, Terraform reduces the risks associated with manual misconfiguration and overly permissive access controls, and helps keep IAM best practices consistently enforced across all AWS accounts.
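As a minimal sketch of assigning read-only S3 permissions to a specific IAM role, the configuration below builds the policy with the `aws_iam_policy_document` data source and attaches it inline to one role. The role name, the EC2 trust relationship, and the bucket name are assumptions made for illustration, not part of the original example.

```hcl
# Trust policy: which principal may assume the role.
# EC2 is an assumption for this sketch; use the service or account you need.
data "aws_iam_policy_document" "assume_by_ec2" {
  statement {
    effect  = "Allow"
    actions = ["sts:AssumeRole"]

    principals {
      type        = "Service"
      identifiers = ["ec2.amazonaws.com"]
    }
  }
}

# Permission policy: read objects from one bucket and nothing else.
data "aws_iam_policy_document" "read_objects" {
  statement {
    effect    = "Allow"
    actions   = ["s3:GetObject"]
    resources = ["arn:aws:s3:::my-secure-bucket/*"] # placeholder bucket name
  }
}

resource "aws_iam_role" "report_reader" {
  name               = "report-reader" # hypothetical role name
  assume_role_policy = data.aws_iam_policy_document.assume_by_ec2.json
}

# Inline policy bound to this single role.
resource "aws_iam_role_policy" "report_reader_s3" {
  name   = "s3-read-only"
  role   = aws_iam_role.report_reader.id
  policy = data.aws_iam_policy_document.read_objects.json
}
```

Using the data source instead of a hand-written JSON string lets `terraform validate` catch structural mistakes in the policy before anything is deployed.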

Storage Governance: Securing S3 and Enforcing Encryption

Misconfigured storage is one of the leading causes of data breaches in the cloud. Publicly accessible S3 buckets, unencrypted storage, and missing logging mechanisms can expose sensitive information. Terraform allows organizations to enforce storage security policies at the infrastructure level. S3 bucket policies can be configured to block public access, ensuring that no accidental exposure occurs. Encryption can also be enforced to protect data at rest, ensuring compliance with CIS, PCI-DSS, and NIST standards.

| block_public_acls = true block_public_policy = true restrict_public_buckets = true |

By integrating these storage policies into Terraform modules, security teams can standardize encryption and access policies across multiple environments, eliminating manual errors and enforcing compliance from the start.
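One way to package these storage controls is a small reusable module, sketched below. The module path, variable name, and output are hypothetical; the sketch also sets `ignore_public_acls`, which the fragment above leaves out.

```hcl
# modules/secure-bucket/main.tf (hypothetical module path)

variable "bucket_name" {
  type        = string
  description = "Globally unique name for the bucket"
}

resource "aws_s3_bucket" "this" {
  bucket = var.bucket_name
}

# Default server-side encryption for every object written to the bucket.
resource "aws_s3_bucket_server_side_encryption_configuration" "this" {
  bucket = aws_s3_bucket.this.id

  rule {
    apply_server_side_encryption_by_default {
      sse_algorithm = "AES256"
    }
  }
}

# All four public access block settings enabled.
resource "aws_s3_bucket_public_access_block" "this" {
  bucket                  = aws_s3_bucket.this.id
  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}

output "bucket_arn" {
  value = aws_s3_bucket.this.arn
}

# Example call from a root configuration:
#
# module "finance_data" {
#   source      = "./modules/secure-bucket"
#   bucket_name = "org-finance-data"
# }
```

Because every bucket is created through the same module, a change to the encryption or access settings is made once and reaches every environment on the next apply.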

Network Governance: Restricting Traffic and Enforcing VPC Security

Unrestricted network access poses a significant security threat in AWS environments. Misconfigured security groups, network ACLs (NACLs), and VPC peering rules can expose resources to the public internet, increasing the attack surface. Terraform enables teams to define security group rules as part of infrastructure deployments, ensuring that only authorized traffic flows into cloud workloads. If an organization wants to allow SSH access only from a trusted IP address, this can be enforced at deployment.

| from_port = 22 to_port = 22 protocol = "tcp" cidr_blocks = ["203.0.113.0/32"] |

By defining network policies in Terraform, organizations can prevent open security groups, enforce zero-trust network segmentation, and reduce the risk of accidentally exposing critical services.
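The trusted-IP rule can be guarded with input validation, so that an open CIDR block is rejected before a plan is even produced. This is a sketch under assumptions: the variable names are illustrative, and the check only rejects the exact value `0.0.0.0/0`, not every overly broad range.

```hcl
variable "ssh_allowed_cidr" {
  type        = string
  description = "Single trusted CIDR block allowed to reach port 22"

  validation {
    # cidrhost() fails on malformed input, so can() doubles as a syntax check.
    condition     = can(cidrhost(var.ssh_allowed_cidr, 0)) && var.ssh_allowed_cidr != "0.0.0.0/0"
    error_message = "The ssh_allowed_cidr value must be a valid CIDR block and must not be 0.0.0.0/0."
  }
}

variable "vpc_id" {
  type        = string
  description = "ID of an existing VPC (assumed to exist)"
}

resource "aws_security_group" "ssh" {
  name        = "restricted-ssh"
  description = "SSH from one trusted CIDR only"
  vpc_id      = var.vpc_id

  ingress {
    from_port   = 22
    to_port     = 22
    protocol    = "tcp"
    cidr_blocks = [var.ssh_allowed_cidr]
  }

  # No egress block is declared, so Terraform removes the default
  # allow-all egress rule; add one if the workload needs outbound access.
}
```

Running `terraform plan -var 'ssh_allowed_cidr=0.0.0.0/0'` against this configuration fails with the error message above instead of proposing an open security group.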

Scaling Governance Policies with Terraform

Manually managing security policies across multiple AWS accounts and environments introduces inconsistency and risk. Terraform provides a way to standardize governance policies, ensuring that security configurations remain declarative, version-controlled, and reproducible. By integrating Terraform with CI/CD pipelines, organizations can enforce security policies before deployment, preventing misconfigurations from ever reaching production. Terraform can also be used with AWS Config to detect configuration drift, alerting teams when resources deviate from the approved governance baseline. Effective governance requires continuous enforcement, real-time monitoring, and automated remediation. By using Terraform to manage IAM, storage, and network policies, organizations can proactively secure their AWS environments, enforce compliance at scale, and close security gaps across their infrastructure.
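Applying one baseline to several AWS accounts might look like the sketch below, which uses provider aliases and the same module for each account. The account IDs, role name, region, and module path are all placeholders chosen for illustration.

```hcl
# One provider alias per target account, each assuming a role in that account.
provider "aws" {
  alias  = "dev"
  region = "eu-west-1"

  assume_role {
    role_arn = "arn:aws:iam::111111111111:role/terraform-governance" # placeholder
  }
}

provider "aws" {
  alias  = "prod"
  region = "eu-west-1"

  assume_role {
    role_arn = "arn:aws:iam::222222222222:role/terraform-governance" # placeholder
  }
}

# The same governance module (hypothetical path) applied to each account.
module "baseline_dev" {
  source = "./modules/governance-baseline"

  providers = {
    aws = aws.dev
  }
}

module "baseline_prod" {
  source = "./modules/governance-baseline"

  providers = {
    aws = aws.prod
  }
}
```

Keeping the baseline in one module means both accounts receive the same IAM, storage, and network rules, and any difference between them has to be expressed explicitly as a module input.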

Automating Compliance Audits Using Terraform

Manually auditing cloud environments for compliance is inefficient, prone to errors, and difficult to scale. Security teams often rely on periodic reviews to identify misconfigurations, but by the time these audits are completed, the infrastructure may have already drifted away from compliance standards. Terraform addresses this challenge by automating compliance audits, ensuring that governance policies are continuously enforced and deviations are detected early. Compliance frameworks like CIS, NIST, and PCI-DSS provide security benchmarks that organizations must follow to secure their cloud environments. Terraform enables teams to define these governance policies as code and validate infrastructure configurations against them. By integrating Terraform with AWS services like AWS Config, organizations can automatically detect and remediate non-compliant resources, reducing the risk of security incidents.

Defining Compliance Checks in Terraform

The first step in automating audits is defining compliance baselines in Terraform. These baselines outline the required configurations for cloud resources, ensuring that security policies are applied consistently across deployments. For example, a compliance rule might state that all S3 buckets must be encrypted and block public access by default. Terraform can enforce this by defining security policies at the infrastructure level.

| block_public_acls = true block_public_policy = true restrict_public_buckets = true |

By applying these policies at deployment, Terraform prevents security misconfigurations before they happen, ensuring that infrastructure always meets compliance requirements.
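For a baseline that should hold for every bucket in an account, including buckets created outside Terraform, the same settings can also be applied at the account level. This is a minimal sketch; the resource label is illustrative.

```hcl
# Account-wide public access block: applies to all current and future
# buckets in the account the provider is authenticated against.
resource "aws_s3_account_public_access_block" "baseline" {
  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}
```

The per-bucket settings shown earlier still make sense alongside this resource, since they keep each bucket compliant even if the account-level setting is later relaxed.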

Terraform’s Declarative Approach to Governance Audits

Terraform follows a declarative model: infrastructure is defined in code, and each plan or apply compares the real infrastructure with the desired configuration and reports any difference. This makes it easier to enforce governance policies, because drift from the approved configuration shows up as a proposed change. With terraform plan, teams can preview infrastructure changes before applying them. If a change would weaken a control, such as enabling public access on an S3 bucket, it appears in the plan output, where a reviewer, a validation rule, or a policy-as-code check can catch it before it is applied. Used this way, the plan acts as an automated security checkpoint throughout the infrastructure lifecycle.
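Terraform can be told explicitly what counts as a violation. One option, sketched here, is a `check` block (available from Terraform 1.5) that asserts the public access settings of a bucket on every plan and apply. The bucket and check names are illustrative.

```hcl
resource "aws_s3_bucket" "reports" {
  bucket = "example-governance-reports" # placeholder bucket name
}

resource "aws_s3_bucket_public_access_block" "reports" {
  bucket                  = aws_s3_bucket.reports.id
  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}

# Evaluated during plan and apply; a failed assertion is reported as a warning.
check "reports_bucket_blocks_public_access" {
  assert {
    condition = alltrue([
      aws_s3_bucket_public_access_block.reports.block_public_acls,
      aws_s3_bucket_public_access_block.reports.block_public_policy,
      aws_s3_bucket_public_access_block.reports.ignore_public_acls,
      aws_s3_bucket_public_access_block.reports.restrict_public_buckets,
    ])
    error_message = "The reports bucket must block all forms of public access."
  }
}
```

A failed `check` does not stop the run. Where a violation should block deployment outright, a `precondition` inside the resource's `lifecycle` block or an external policy-as-code tool is the stricter choice.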

Integrating AWS Config with Terraform for Continuous Monitoring

While Terraform helps enforce governance at deployment, AWS Config ensures that compliance is maintained over time. AWS Config continuously monitors AWS resources and detects any drift from security baselines. When its rules are managed with Terraform, AWS Config can automatically trigger alerts or remediation actions when a resource becomes non-compliant. For example, if an S3 bucket’s encryption setting is disabled, AWS Config can detect the change, and a Config remediation action or the next Terraform run can reapply the correct security settings.

| resource "aws_config_config_rule" "s3_encryption_check" { name = "s3-encryption-check" source { owner = "AWS" source_identifier = "S3_BUCKET_SERVER_SIDE_ENCRYPTION_ENABLED" } } |

By using AWS Config alongside Terraform, organizations can maintain compliance across AWS environments, detecting and correcting misconfigurations in real time.
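Automatic correction can be expressed in Terraform as a remediation configuration attached to the rule. The sketch below assumes the `aws_config_config_rule.s3_encryption_check` resource shown above and an existing IAM role that Systems Manager Automation may assume; the variable name is hypothetical.

```hcl
variable "remediation_role_arn" {
  type        = string
  description = "ARN of an existing role for SSM Automation (assumed to exist)"
}

resource "aws_config_remediation_configuration" "s3_encryption" {
  config_rule_name = aws_config_config_rule.s3_encryption_check.name
  resource_type    = "AWS::S3::Bucket"
  target_type      = "SSM_DOCUMENT"
  target_id        = "AWS-EnableS3BucketEncryption" # AWS-managed automation document

  parameter {
    name         = "AutomationAssumeRole"
    static_value = var.remediation_role_arn
  }

  # RESOURCE_ID is substituted with the non-compliant bucket's name.
  parameter {
    name           = "BucketName"
    resource_value = "RESOURCE_ID"
  }

  parameter {
    name         = "SSEAlgorithm"
    static_value = "AES256"
  }

  # Run without manual approval, with bounded retries.
  automatic                  = true
  maximum_automatic_attempts = 5
  retry_attempt_seconds      = 600
}
```

Setting `automatic = false` keeps the remediation available as a manual action, which can be a safer starting point while the rule is still being tuned.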

Generating Governance Compliance Reports with Terraform

Governance audits often require detailed reporting to demonstrate compliance with regulatory frameworks. Terraform outputs can feed these reports by exposing identifiers of the governance controls that Terraform manages. Terraform itself does not know which resources are non-compliant, but it can output the ARN of each AWS Config rule so that reporting tools can query AWS Config for the resources that violate it.

| output "s3_encryption_rule_arn" { value = aws_config_config_rule.s3_encryption_check.arn } |

These reports can be integrated into security dashboards, helping organizations maintain transparency and track compliance trends over time.
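When several rules are managed together, a single map output gives a reporting job everything it needs in one place. In this sketch the rule names are illustrative, while the source identifiers are AWS managed rules.

```hcl
locals {
  # Rule name => AWS managed rule identifier.
  managed_rules = {
    "s3-encryption-check"  = "S3_BUCKET_SERVER_SIDE_ENCRYPTION_ENABLED"
    "s3-public-read-check" = "S3_BUCKET_PUBLIC_READ_PROHIBITED"
    "restricted-ssh-check" = "INCOMING_SSH_DISABLED"
  }
}

resource "aws_config_config_rule" "managed" {
  for_each = local.managed_rules

  name = each.key

  source {
    owner             = "AWS"
    source_identifier = each.value
  }
}

# Map of rule name => rule ARN, readable with `terraform output -json`.
output "governance_rule_arns" {
  description = "Config rule ARNs that a reporting job can use to query compliance"
  value       = { for name, rule in aws_config_config_rule.managed : name => rule.arn }
}
```

The compliance results themselves still come from AWS Config; the output only tells the reporting job which rules to ask about.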

Proactive Compliance Auditing with Terraform

By automating compliance checks with Terraform, organizations move from a reactive to a proactive approach in cloud governance. Instead of waiting for audits to uncover security gaps, Terraform ensures that compliance policies are enforced before deployment and continuously monitored after deployment. With a combination of policy-as-code, AWS Config, and automated reporting, Terraform enables organizations to maintain a secure, compliant, and well-governed AWS environment at scale.

Hands-On: Implementing Cloud Governance with Terraform

Governance policies ensure that cloud environments remain secure, compliant, and well-structured. However, defining policies alone is not enough – these rules must be enforced at every stage of infrastructure deployment. Terraform enables organizations to implement governance controls as code, ensuring that security policies are applied consistently across all AWS environments. This section focuses on setting up Terraform for governance enforcement, applying IAM policies to restrict access, securing S3 storage, enforcing network security rules, integrating AWS Config for compliance monitoring, and generating governance audit reports. By the end of this implementation, Terraform will automate governance policies, detect any deviation from security baselines, and ensure that AWS resources remain compliant.

Setting Up Terraform for Governance Enforcement

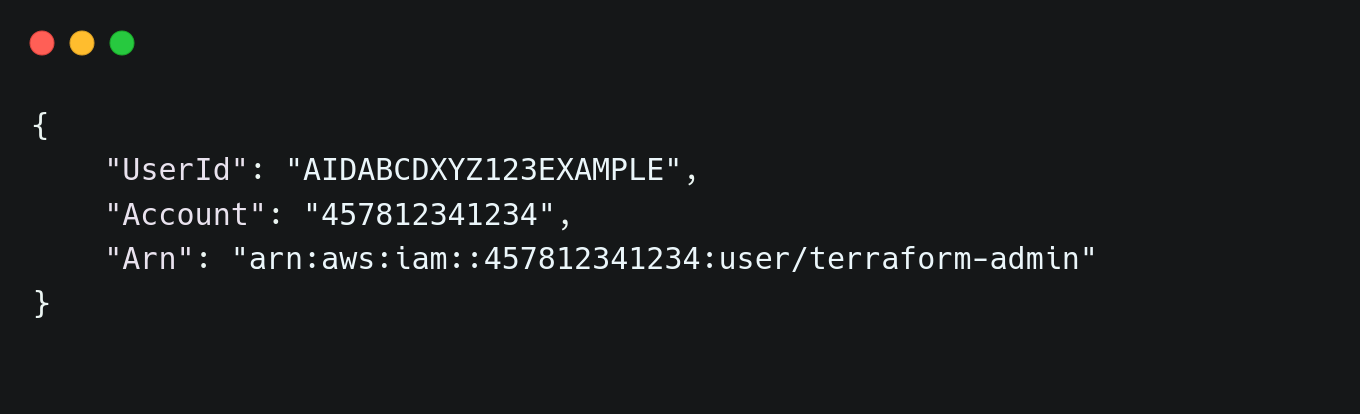

Before applying governance policies, Terraform needs to be configured to interact with AWS. The first step is to verify AWS authentication by running:

| aws sts get-caller-identity |

This command confirms the authenticated AWS account and user details. A successful response returns the UserId, Account, and Arn of the calling identity.

Once authentication is confirmed, Terraform must be initialized to prepare the environment for infrastructure provisioning. Running the terraform init command installs required provider plugins and ensures Terraform is ready to apply governance policies. With Terraform set up, governance policies can now be applied to enforce security standards across AWS resources.
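For terraform init to know which plugins to install, the configuration needs a provider declaration. A minimal sketch follows; the version constraints, region, and tag values are assumptions to adapt.

```hcl
terraform {
  required_version = ">= 1.5.0"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0" # pin a major version so upgrades are deliberate
    }
  }
}

provider "aws" {
  region = "us-east-1" # placeholder region

  # Tags applied to every taggable resource this provider creates,
  # which helps audits trace resources back to Terraform.
  default_tags {
    tags = {
      ManagedBy = "terraform"
      Purpose   = "governance"
    }
  }
}
```

The provider picks up the same credentials that `aws sts get-caller-identity` used, so no access keys need to appear in the configuration.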

Enforcing IAM Policies with Terraform

Identity and Access Management (IAM) controls who can access cloud resources and what actions they can perform. Overly permissive IAM policies increase security risks, making it crucial to follow the principle of least privilege. Terraform allows IAM policies to be defined as code, ensuring uniform enforcement across environments. To restrict access to an S3 bucket, a policy can be defined that allows only read-only permissions. This prevents unauthorized modifications while ensuring data accessibility.

| resource "aws_iam_policy" "s3_read_only" { name = "S3ReadOnlyAccess" description = "Provides read-only access to S3" policy = jsonencode({ Version = "2012-10-17", Statement = [ { Effect = "Allow", Action = ["s3:GetObject"], Resource = "arn:aws:s3:::prod-data-secure/*" } ] }) } |

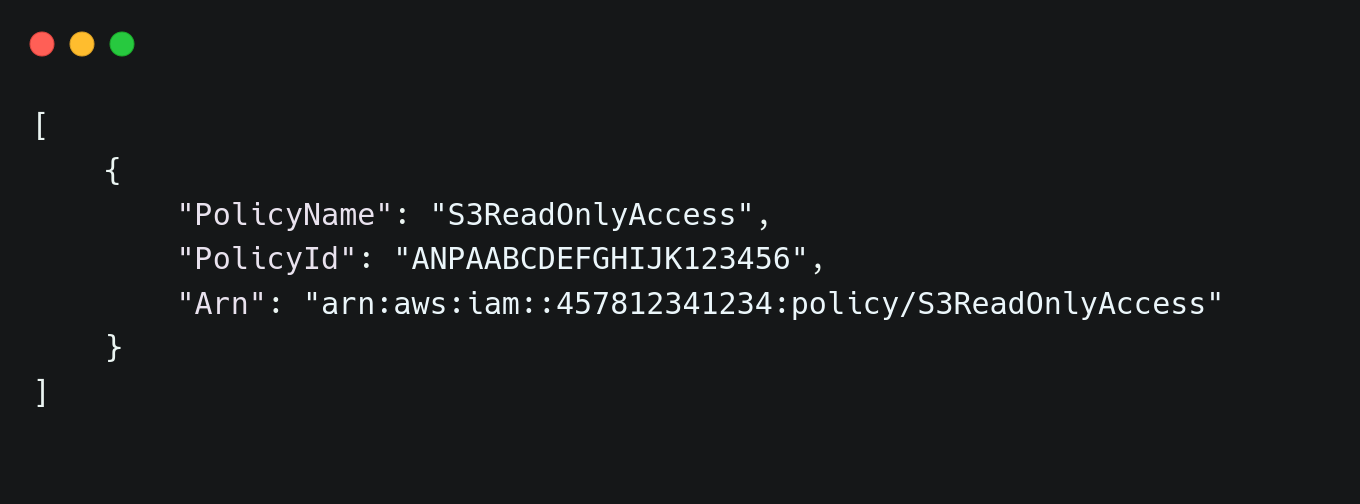
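A managed policy grants nothing until it is attached to an identity. The sketch below attaches the `aws_iam_policy.s3_read_only` resource defined above to a role that is assumed to exist already; the role name is hypothetical.

```hcl
# Look up an existing role by name (hypothetical name).
data "aws_iam_role" "reporting_app" {
  name = "reporting-app"
}

# Attach the read-only policy from the configuration above to that role.
resource "aws_iam_role_policy_attachment" "reporting_app_s3_read" {
  role       = data.aws_iam_role.reporting_app.name
  policy_arn = aws_iam_policy.s3_read_only.arn
}
```

Managing the attachment in Terraform also makes it visible in plans, so granting the policy to an additional role becomes a reviewed change.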

Once this policy is attached to a user or role, that identity can retrieve objects from the bucket, and the policy itself grants no permission to modify or delete them. The policy is created by running the terraform apply command. Verification can be done by listing IAM policies to check if the new policy exists:

| aws iam list-policies --query "Policies[?PolicyName=='S3ReadOnlyAccess']" |

A properly applied policy returns a single entry that includes the policy’s name, ID, and ARN.

Securing S3 Buckets and Enforcing Encryption

Publicly accessible S3 buckets and unencrypted storage create vulnerabilities that can lead to data breaches. Terraform ensures that all S3 buckets are encrypted and block public access by default. A governance-compliant bucket configuration includes encryption enforcement and access restrictions.

| resource "aws_s3_bucket" "secure_bucket" { bucket = "org-finance-data" } resource "aws_s3_bucket_server_side_encryption_configuration" "secure_bucket_encryption" { bucket = aws_s3_bucket.secure_bucket.id rule { apply_server_side_encryption_by_default { sse_algorithm = "AES256" } } } resource "aws_s3_bucket_public_access_block" "bucket_block" { bucket = aws_s3_bucket.secure_bucket.id block_public_acls = true block_public_policy = true restrict_public_buckets = true } |

After running the terraform apply command, the security status of the bucket can be verified using the AWS CLI:

| aws s3api get-public-access-block --bucket org-finance-data |

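A properly secured bucket returns a PublicAccessBlockConfiguration in which BlockPublicAcls, BlockPublicPolicy, and RestrictPublicBuckets are all true.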

Enforcing Network Security with Terraform

Security groups play a crucial role in restricting network access. Misconfigured security groups can expose services to the public internet, making them vulnerable to unauthorized access. To enforce network security, Terraform can define strict inbound and outbound rules. For SSH access, the following configuration allows connections only from a specific trusted IP:

| resource "aws_security_group" "restricted_sg" { name = "restricted_ssh" description = "Allows SSH access only from trusted IP" vpc_id = aws_vpc.main.id ingress { from_port = 22 to_port = 22 protocol = "tcp" cidr_blocks = ["192.168.1.100/32"] } egress { from_port = 0 to_port = 0 protocol = "-1" cidr_blocks = ["0.0.0.0/0"] } } |

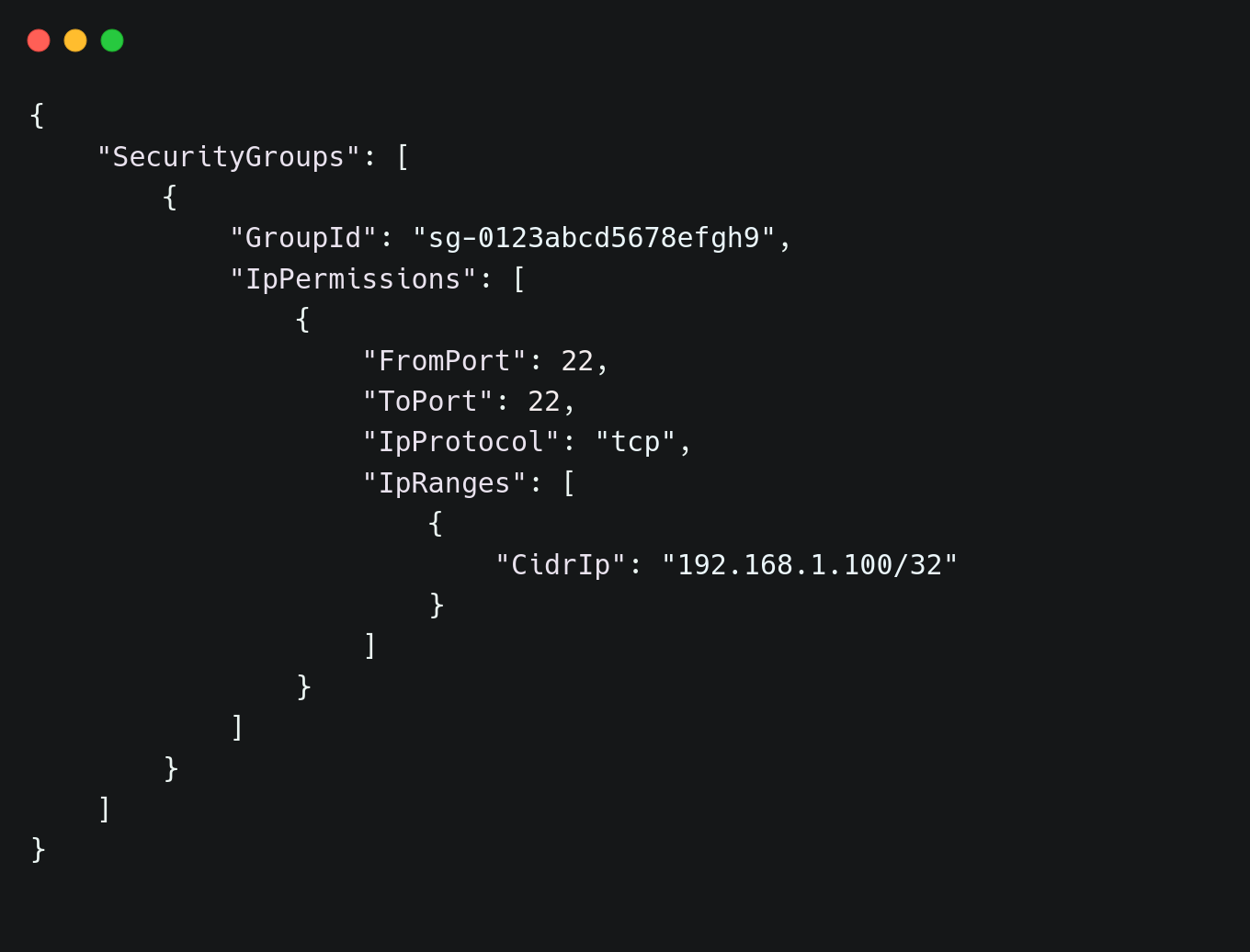

Applying the configuration ensures that only the specified IP can connect via SSH. Running the following command confirms the security group’s configuration:

| aws ec2 describe-security-groups --group-ids sg-0123abcd5678efgh9 |

The output should show a single inbound rule for TCP port 22 whose only allowed source is the trusted /32 address.

Integrating AWS Config for Governance Monitoring

AWS Config helps detect configuration drift and ensures that resources remain compliant with governance rules. Terraform can define a compliance rule to check if all S3 buckets are encrypted.

| resource "aws_config_config_rule" "s3_encryption_check" { name = "s3-encryption-check" source { owner = "AWS" source_identifier = "S3_BUCKET_SERVER_SIDE_ENCRYPTION_ENABLED" } } |

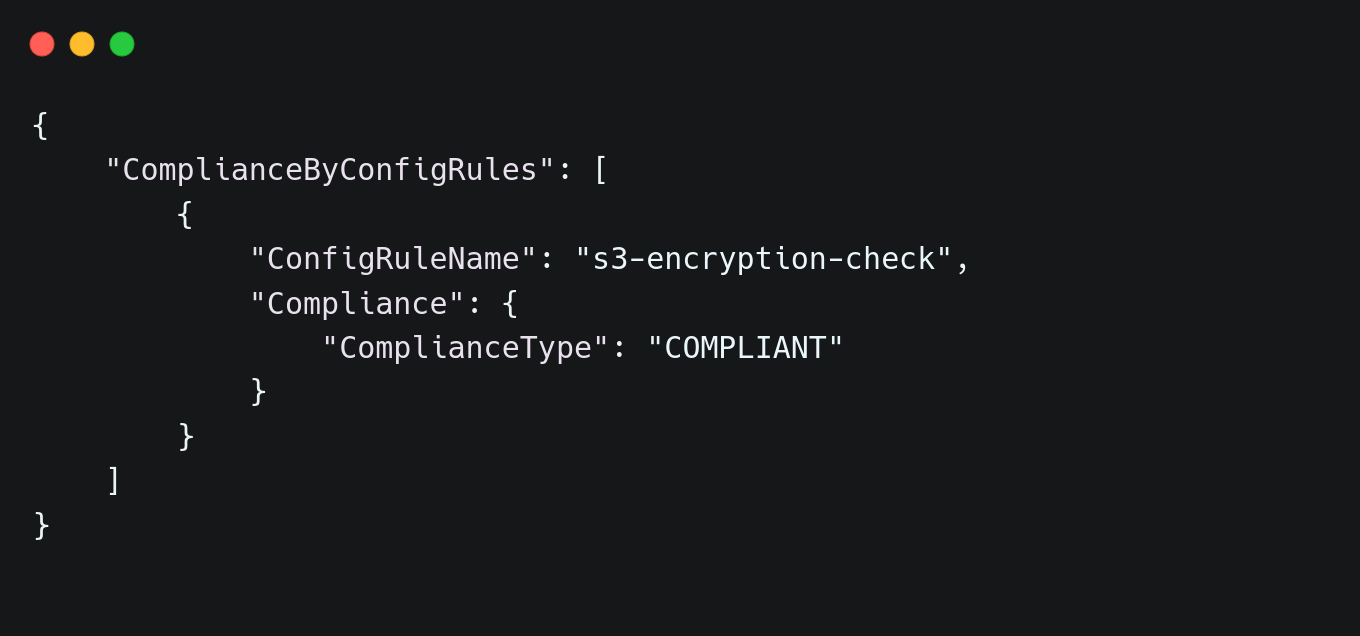

Once deployed, AWS Config will monitor encryption settings in real time. Compliance status can be checked using:

| aws configservice describe-compliance-by-config-rule --config-rule-names s3-encryption-check |

If all buckets comply, the response reports a ComplianceType of COMPLIANT for the rule.
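A Config rule only produces results when AWS Config is recording in the account and region. If no recorder exists yet, a setup along these lines may be needed first. The role and recorder names are illustrative, and the delivery bucket is assumed to exist with a bucket policy that lets AWS Config write to it.

```hcl
variable "config_bucket_name" {
  type        = string
  description = "Existing S3 bucket for Config snapshots (assumed to exist)"
}

# Role that the AWS Config service assumes to read resource configurations.
data "aws_iam_policy_document" "config_assume" {
  statement {
    effect  = "Allow"
    actions = ["sts:AssumeRole"]

    principals {
      type        = "Service"
      identifiers = ["config.amazonaws.com"]
    }
  }
}

resource "aws_iam_role" "config" {
  name               = "aws-config-recorder"
  assume_role_policy = data.aws_iam_policy_document.config_assume.json
}

resource "aws_iam_role_policy_attachment" "config" {
  role       = aws_iam_role.config.name
  policy_arn = "arn:aws:iam::aws:policy/service-role/AWS_ConfigRole"
}

resource "aws_config_configuration_recorder" "main" {
  name     = "default"
  role_arn = aws_iam_role.config.arn
}

resource "aws_config_delivery_channel" "main" {
  name           = "default"
  s3_bucket_name = var.config_bucket_name
  depends_on     = [aws_config_configuration_recorder.main]
}

# The recorder can only be started once a delivery channel exists.
resource "aws_config_configuration_recorder_status" "main" {
  name       = aws_config_configuration_recorder.main.name
  is_enabled = true
  depends_on = [aws_config_delivery_channel.main]
}
```

Adding `depends_on = [aws_config_configuration_recorder.main]` to the Config rule keeps Terraform from creating the rule before the recorder exists.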

By implementing these governance policies with Terraform, security and compliance enforcement become an automated, integral part of cloud infrastructure. IAM restrictions, S3 encryption, and network security controls make sure that AWS environments remain protected. With AWS Config monitoring configuration drift, governance becomes a continuous process rather than a reactive measure. This approach ensures that misconfigurations are prevented before they become security risks, maintaining a secure cloud environment at all times.

Best Practices for Continuous Cloud Governance

Implementing governance policies with Terraform ensures a secure cloud environment, but following best practices strengthens compliance, reduces misconfigurations, and prevents security drift.

Version-Control Governance Policies

Storing Terraform configurations in Git allows teams to track changes, enforce approvals, and maintain an audit trail. Combining a branching strategy such as GitFlow with protected branches and required reviews helps prevent unauthorized modifications to governance rules.

Secure Terraform State Files

Terraform state files contain sensitive information, including IAM roles, database credentials, and networking details. Enabling encryption for state files and storing them remotely, for example in AWS S3 with state locking in DynamoDB, protects them from unintended access and from concurrent writes that could corrupt them.
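A backend configuration along these lines implements that setup. The bucket, key, region, and table names are placeholders, and both the bucket and the DynamoDB table are assumed to exist before terraform init is run.

```hcl
terraform {
  backend "s3" {
    bucket = "example-org-terraform-state" # placeholder bucket name
    key    = "governance/terraform.tfstate"
    region = "us-east-1"

    # Encrypt the state object at rest in S3.
    encrypt = true

    # DynamoDB table used for state locking; it needs a string
    # partition key named "LockID". The table name is a placeholder.
    dynamodb_table = "terraform-state-locks"
  }
}
```

Recent Terraform releases also offer locking within S3 itself, so it is worth checking the S3 backend documentation for the version in use. Restricting access to the state bucket with IAM and enabling bucket versioning add further protection against accidental changes.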

Conduct Regular Compliance Audits

Infrastructure changes, whether intentional or accidental, can introduce misconfigurations. Automating compliance checks using AWS Config and Terraform plan ensures that deviations from governance baselines are identified and corrected early.

Integrate Terraform into CI/CD Pipelines

Running terraform validate and terraform plan as part of the pipeline ensures that changes adhere to security policies before reaching production. Combining Terraform with policy-as-code tools like Open Policy Agent (OPA) further strengthens governance by enforcing predefined rules within pipelines.

Making sure that every infrastructure change adheres to governance policies is often difficult. Many security teams rely on manual checks, pre-deployment security reviews, and post-deployment audits to enforce compliance. While these methods can identify misconfigurations, they introduce delays and inconsistencies.

A common challenge in governance enforcement is the lack of real-time validation. Organizations often detect non-compliant configurations only after resources are already deployed, leading to costly rollbacks and security risks. Without a centralized mechanism to enforce security, IAM, and networking policies before deployment, teams struggle with operational inefficiencies and increased exposure to misconfigurations.

One approach to address this challenge is to integrate compliance checks into Terraform workflows. Security teams write custom validation scripts that run during the Terraform execution process, scanning configurations for policy violations. While this method improves governance, it introduces complexity. These scripts must be maintained, updated as security policies evolve, and integrated into CI/CD pipelines. Additionally, enforcement varies across teams, as different engineers may interpret policies differently.

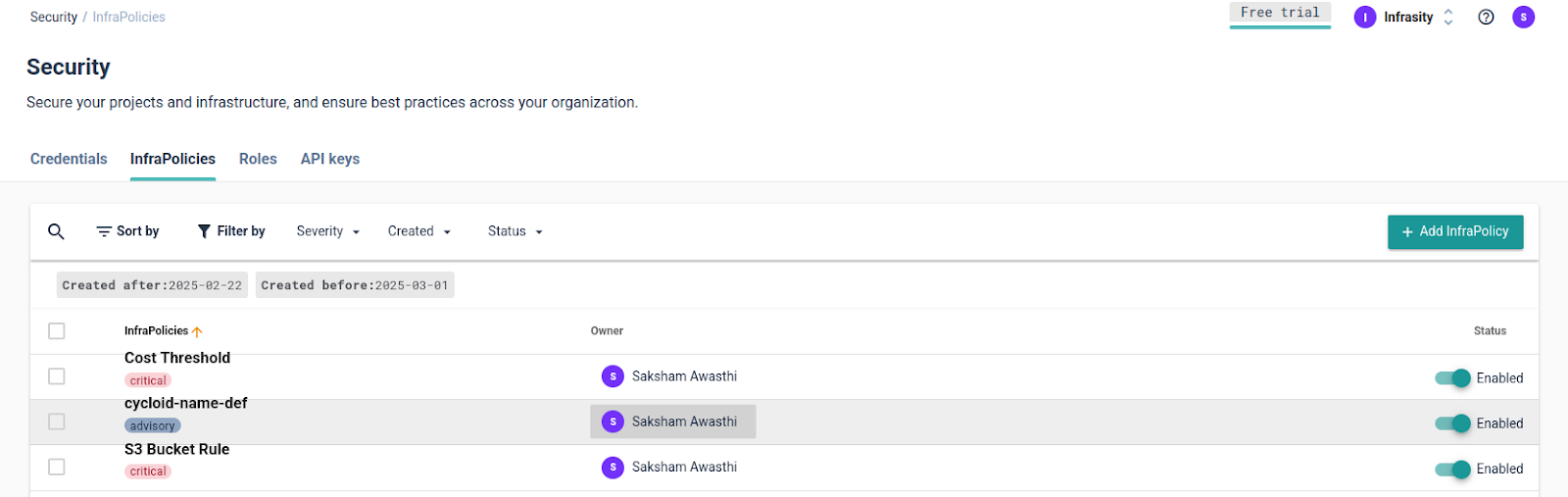

Enforcing Cloud Governance with Cycloid Infra Policies

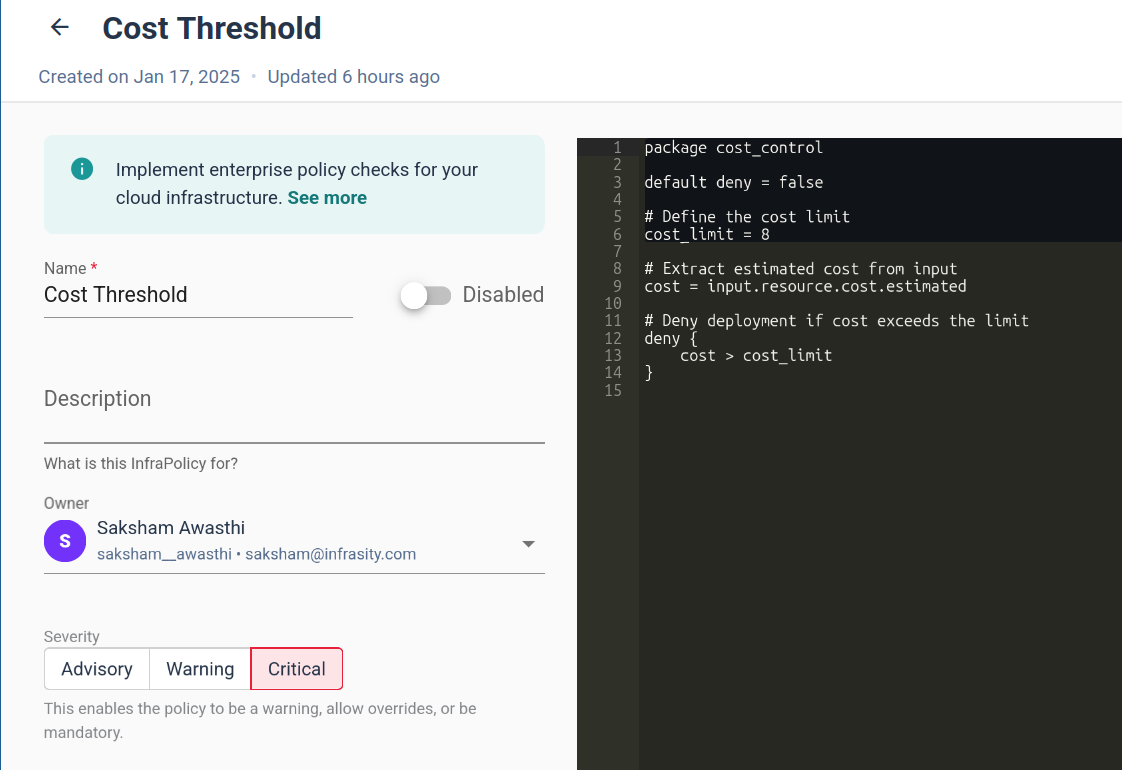

Cycloid’s Infra Policies simplify governance enforcement by providing a centralized, automated approach to policy validation. Rather than relying on custom scripts, like running OPA commands repeatedly or waiting for team members to review your configurations, Cycloid allows DevOps teams to define governance rules as code. These policies are then automatically enforced during the Terraform plan execution, preventing misconfigurations before they make it to production. The first step in implementing governance policies with Cycloid is to create an Infra Policy in the Cycloid console. Navigate to Security -> InfraPolicies, where teams can define policy rules to enforce compliance standards.

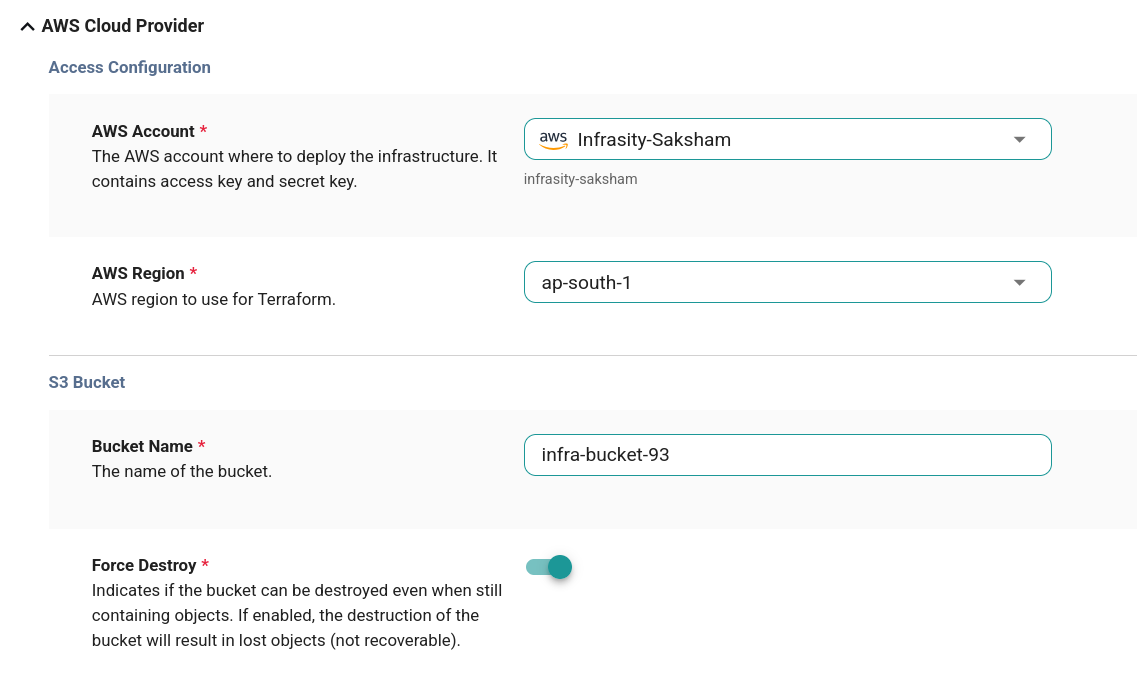

These policies are written in Rego. The accompanying image shows an example of a Rego policy that stops deployment if the resource cost exceeds the defined limit. With this policy in place, Cycloid will block the deployment right at the Terraform plan stage.

For more examples of InfraPolicies written in Rego, refer to the Cycloid documentation. Once the policy is created, Cycloid validates infrastructure changes against it. Before this happens, you need to go to the project and environment sections in Cycloid and fill out the resource details, such as the bucket name and region if you’re creating a new S3 bucket. This step makes sure that Cycloid can evaluate your infrastructure configurations properly.

In order to use InfraPolicy within the pipeline, you’ll need to configure the cycloid-resource resource within your pipeline as follows:

| - name: cycloid-resource type: registry-image source: repository: cycloid/cycloid-resource tag: latest |

After this, you need to configure the InfraPolicy resource:

| - name: infrapolicy type: cycloid-resource source: feature: infrapolicy api_key: ((cycloid_api_key)) api_url: ((cycloid_api_url)) env: ((env)) org: ((customer)) project: ((project)) |

This configuration will link the InfraPolicy to your pipeline and enable policy enforcement during Terraform deployment. The important step comes when you use the put step, which makes sure that the policy is checked before the deployment proceeds:

| - put: infrapolicy params: tfplan_path: tfstate/plan.json |

When this is set up, if any policy violation is detected, Cycloid will block the deployment right at the Terraform plan stage, preventing non-compliant resources from being provisioned. This makes sure that any infra changes that do not meet the governance rules are stopped early within the pipeline. For more information on integrating Cycloid InfraPolicy, refer to the Cycloid Resource GitHub repository. The effectiveness of this approach becomes clear when reviewing the Terraform execution pipelines in Cycloid. During the pipeline run, you’ll immediately see if the configuration aligns with governance policies. If all rules are met, the deployment proceeds. If any rule is violated, Cycloid halts the process and provides detailed feedback on what went wrong.

This proactive policy enforcement reduces the risk of misconfigurations slipping through and removes the need for manual post-deployment audits. With Cycloid, governance is built into the deployment process, making it seamless and reliable. Cycloid Infra Policies ensure that governance enforcement isn’t an afterthought but an integral part of the infrastructure lifecycle. By defining policies centrally and enforcing them automatically, organizations can scale security best practices across teams without increasing operational overhead. This structured approach helps improve compliance, reduces errors, and allows DevOps teams to focus on delivering stable, secure infrastructure. Cycloid doesn’t stop at just InfraPolicy for governance. With its comprehensive cloud governance solutions, Cycloid enables you to manage everything from security and cost control to compliance at a large scale. For more information, check out Cycloid’s Cloud Governance solutions.

Conclusion

Now, you should have a clear understanding of how Terraform automates governance, enforces compliance, and prevents misconfigurations. Integrating policy checks early ensures secure, auditable, and compliant cloud environments.

Frequently Asked Questions

1. Which AWS Cloud Service Enables Governance Compliance, Operational Auditing, and Risk Auditing of Your AWS Account?

AWS CloudTrail is the primary service that enables governance, compliance, operational auditing, and risk auditing of AWS accounts. It continuously records AWS API calls, providing visibility into user activity across AWS services. CloudTrail logs include details such as the identity of the caller, the time of the API call, the source IP address, and the request parameters. These logs help monitor changes, detect unusual activities, and ensure compliance with internal security policies and external regulations like GDPR, HIPAA, and SOC 2.

2. Which AWS Service Continuously Audits AWS Resources and Enables Them to Assess Overall Compliance?

AWS Config is a managed service that continuously audits and assesses the configuration of AWS resources to ensure they comply with security best practices and governance policies. It tracks configuration changes in resources such as EC2 instances, security groups, IAM roles, and S3 buckets, maintaining a historical record of configurations.

Organizations use AWS Config to:

- Assess compliance with industry standards such as PCI-DSS, NIST, ISO 27001, and CIS benchmarks.

- Detect misconfigurations and remediate them using AWS Config Rules and AWS Systems Manager.

- Monitor resource relationships and dependencies for better visibility into infrastructure changes.

3. Which AWS Service Supports Governance, Compliance, and Risk Auditing of AWS Accounts?

AWS Audit Manager is a fully managed service that simplifies compliance assessments and risk auditing by automating the collection of evidence across AWS resources.

The key capabilities of AWS Audit Manager include:

- Automated compliance reporting for frameworks such as SOC 2, ISO 27001, PCI-DSS, GDPR, and HIPAA.

- Continuous evidence collection to track user activity, resource changes, and security configurations.

- Customizable assessment frameworks to align with internal governance policies.

Organizations use Audit Manager to simplify compliance processes, reduce manual effort in audits, and ensure that security controls are continuously monitored and assessed.

4. What Are Governance, Risk, and Compliance in Cloud Computing?

Governance, Risk, and Compliance (GRC) in cloud computing refers to a structured framework that helps organizations:

- Governance: Define policies and enforce best practices to ensure secure and efficient use of cloud resources. This includes identity and access management, cost control, and operational policies.

- Risk Management: Identify, assess, and mitigate security risks, misconfigurations, and potential breaches by implementing proactive security controls.

- Compliance: Ensure that cloud infrastructure adheres to regulatory requirements such as GDPR, HIPAA, SOC 2, and ISO 27001, and that cloud workloads meet industry standards.

AWS provides several GRC tools, including AWS Organizations, AWS Control Tower, AWS Security Hub, AWS CloudTrail, and AWS Audit Manager, to help enterprises implement a robust GRC strategy.

5. What is the Cloud Governance Structure in Cloud Service Management?

Cloud governance structure refers to the policies, roles, responsibilities, and best practices that define how cloud environments are managed and secured. It ensures that an organization maintains control over cloud resources while staying compliant with industry regulations.

A strong cloud governance structure includes:

- Identity and Access Management (IAM): Defining roles, permissions, and least-privilege access policies.

- Security Policies and Compliance Frameworks: Enforcing standards like CIS, NIST, PCI-DSS, and automated compliance monitoring.

- Resource Management and Cost Control: Implementing budget controls, cost allocation tags, and reserved capacity planning.

- Continuous Monitoring and Auditing: Using services like AWS Security Hub, AWS Config, CloudTrail, and GuardDuty to detect anomalies and maintain security posture.